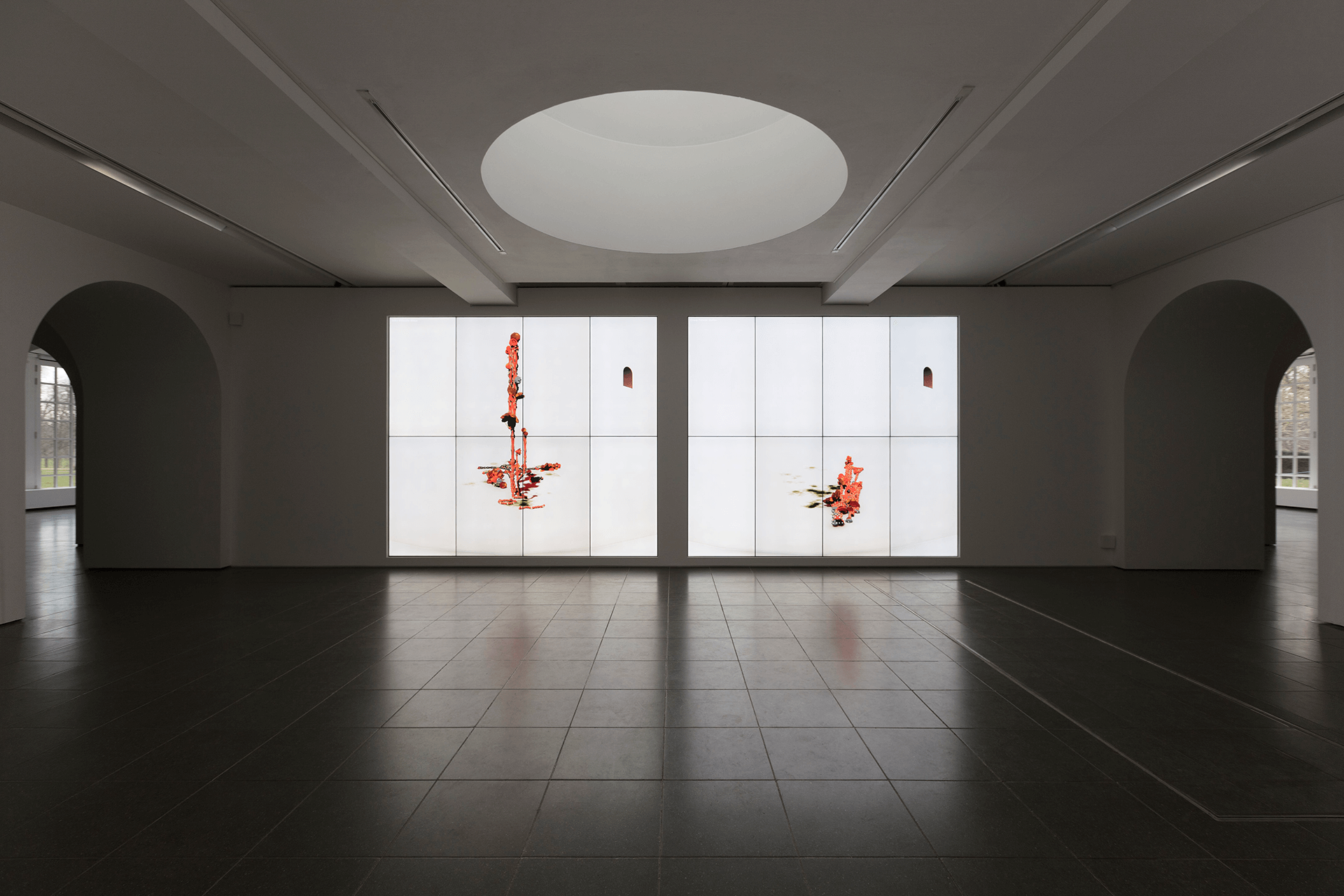

Ian Cheng: BOB (2018)

INSTALLATION // ART // AR // SERPENTINE // INTERACTIVE

Real-Time Tracking and Emotional Interaction

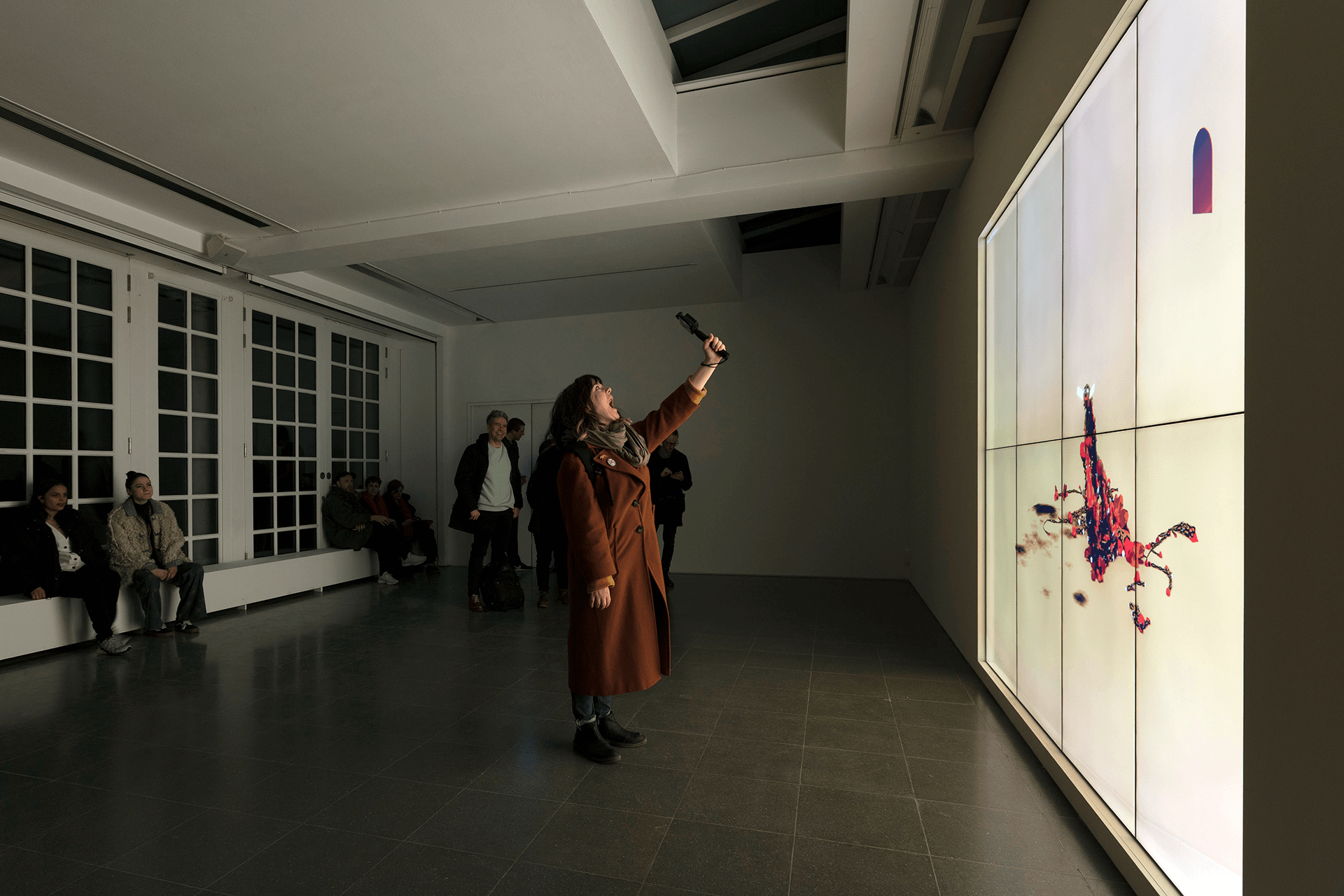

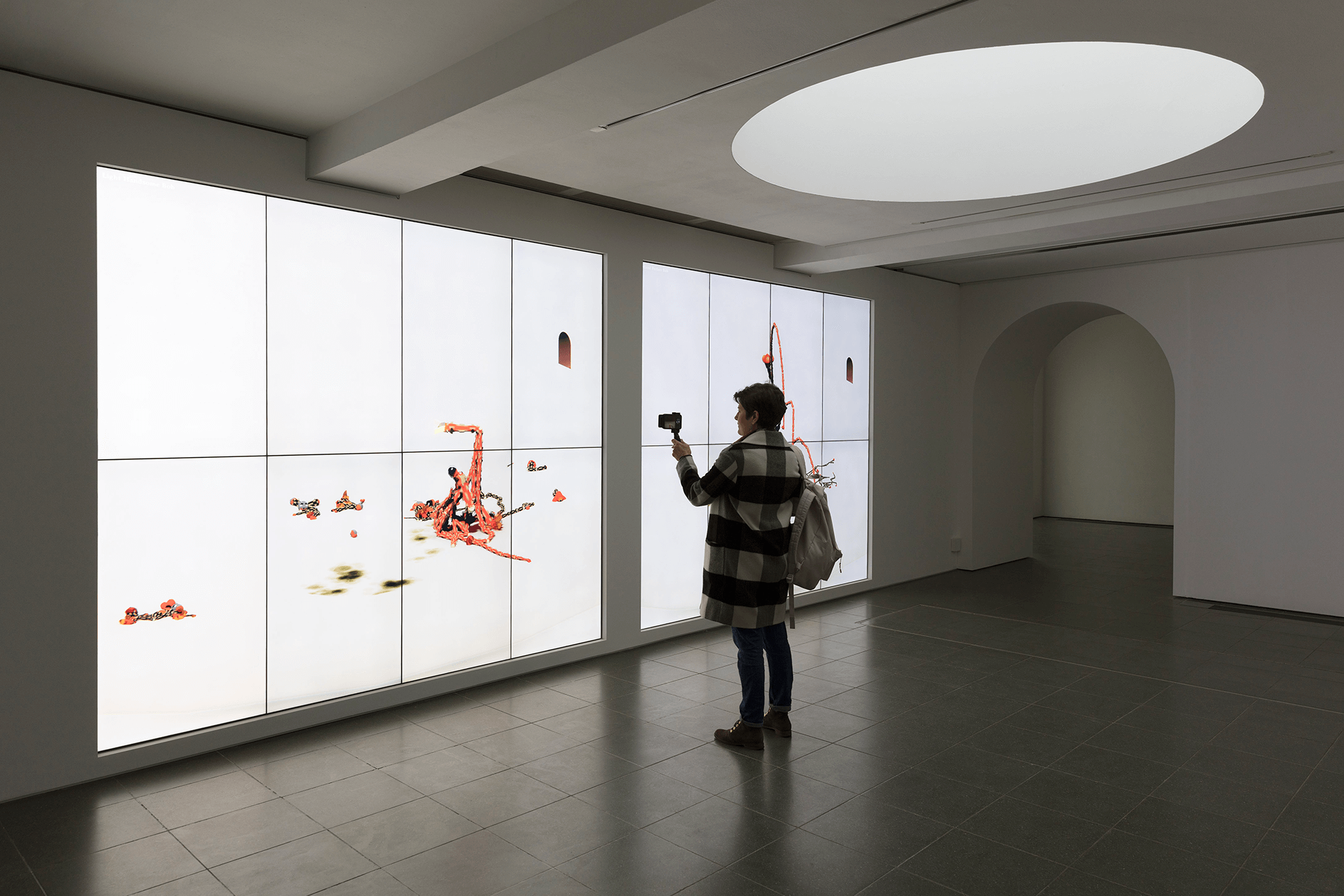

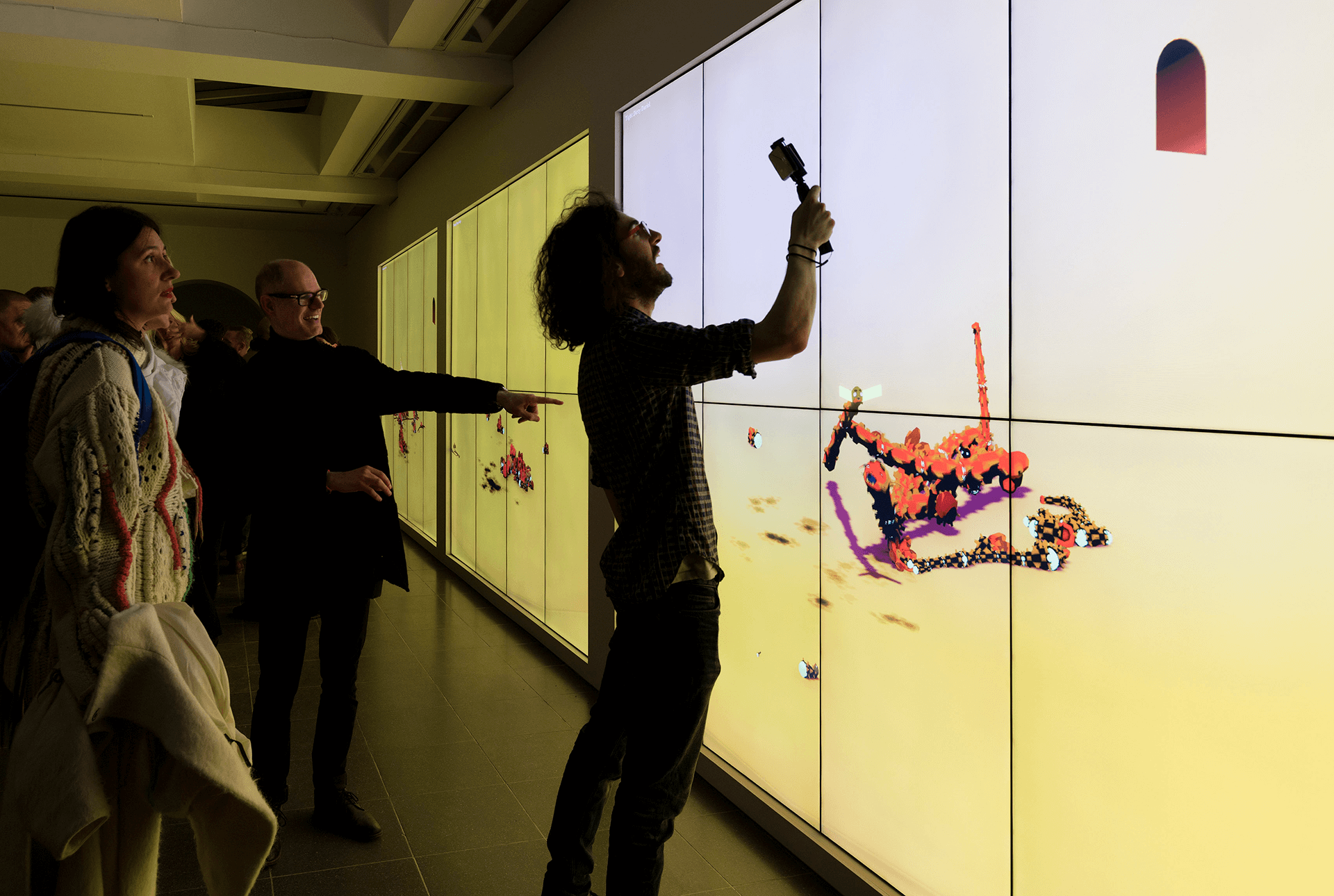

Ian Cheng - BOB - 2018

BOB stands for Bag of Beliefs. BOB is an artificial lifeform who enjoys the thrill of making his own path, and the growing pains of developing a generative attitude towards living. BOB's experiences solidify into personality traits and patterns of growth. The life story of BOB is persistent, irreversible, aging, and consequential. Yet, when conditions are ripe, BOB may engage in activities powerful enough to change its mind and reshape its body.

Ian Cheng’s work explores mutation, the history of human consciousness and our capacity as a species to relate to change. Drawing on principles of video game design, improvisation and cognitive science, Cheng develops live simulations – virtual ecosystems of infinite duration, populated with agents who are programmed with behavioral drives but left to self-evolve without authorial intent. Cheng was interested in exploring ways in which these simulations could become interactive and open to the influence of the viewers themselves.

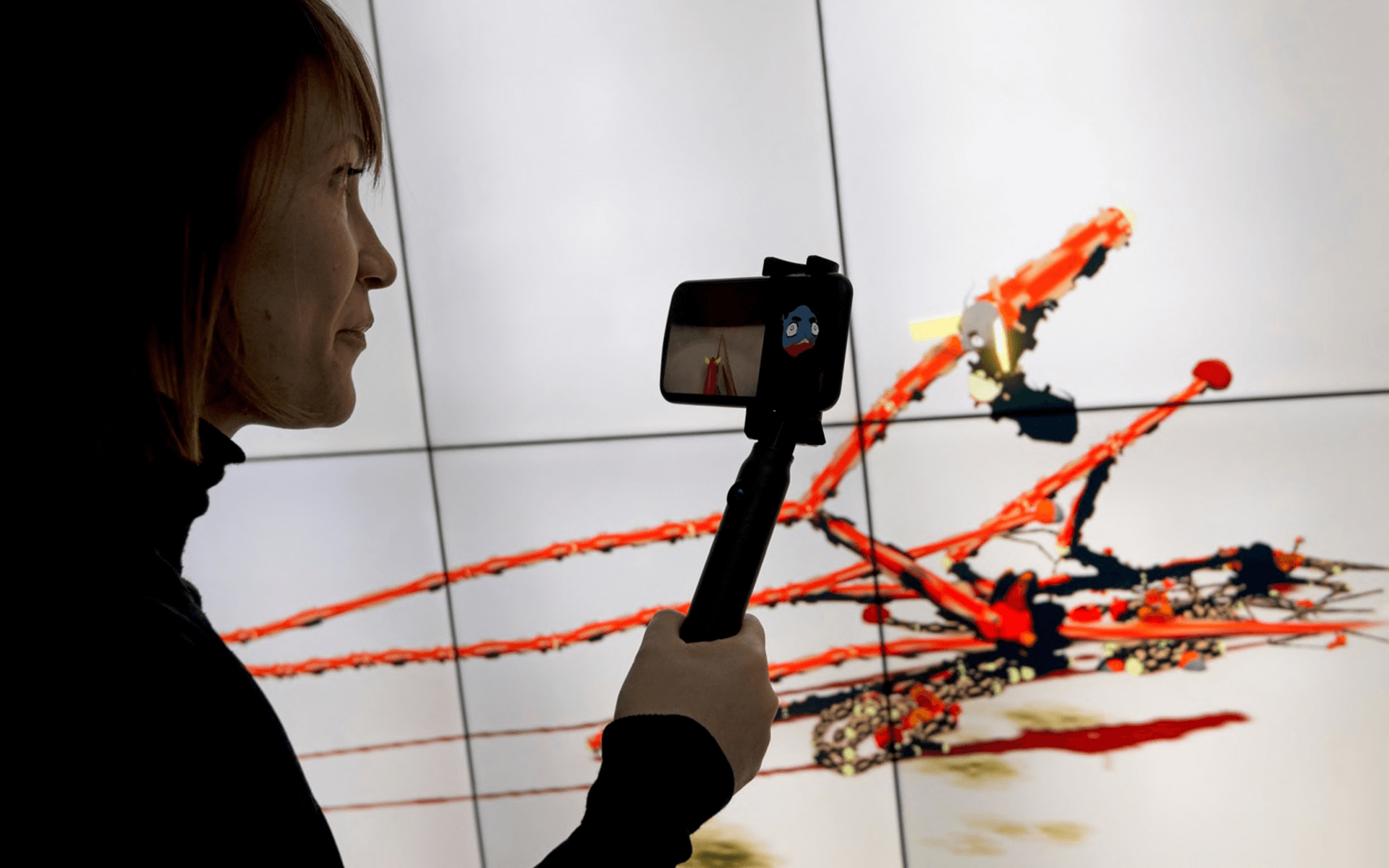

Luxloop was approached by Cheng to develop the tracking and interaction systems for his show at The Serpentine Gallery in London. The intent was to develop a system that would enable visitors to interact with and affect BOB's AI.

Featured on: The Telegraph, Vice, Londonist

Creating Presence in BOB's World

This physical presence is further reinforced through the POV inside BOB's enclosure, which is streamed back to the iPhone X in real time. Luxloop developed a custom solution for compressing and streaming the virtual camera feed in BOB's world to the connected smartphone, as well as for sending information back to BOB.

Understanding Emotional Cues